Greater than 6 minutes, my friend!

An overview of the knowledge café workshops on #NMT and the future of #CAT tools at the #JIAMCATT2019 annual meeting

The 2019 JIAMCATT annual conference was held in Luxembourg from 13 to 15 May and its topic was “The shape of things to come: how technology and innovation are transforming the language profession”. As the topic of the conference suggested, all presentations focused on how technology is changing the professions in the fields of translation and terminology in particular, and of languages in general – a quite old and never-ending discussion that has been present in past JIAMCATT (and not only) meetings for quite some years.

Machine translation was, without any surprise, the protagonist of the conference. Several discussions showed that different MT solutions were implemented around various organisations over the past years. A lot of effort was made in implementing not only SMT solutions, but also the more recent NMT: the European Commission eTranslation NMT system is successfully up and running in all EU languages in many EU institutions and bodies, either as an additional tool for translators, or as a valuable asset for non-linguists who wish to get an overview (gist translation) of a text drafted in a language that they do not know. Whereas in the United Nations family, the WIPO has reached very good results with WIPO Translate, also widespread among UN bodies. These are custom solutions implemented by the internal language services of such organisations, but other smaller bodies also tested and implemented MT in their workflow by relying on market solutions offering customised MT engines (both SMT or NMT).

A very interesting and provocative presentation given by Jochem Hummel, the keynote speaker of this year JIAMCATT, entitled “Sun setting of CAT tools”, dominated the conference atmosphere. The discussion focused around different “modern” topics such as: the increased cognitive effort required in post-editing as a consequence of NMT implementations, elevating terminology from a concept‑based approach to a knowledge‑based approach, new skills required by the actors involved in the translation process and inclusion of experts in the translation workflow (fast, cheap, better (?)), the overload of information (reliable vs. unreliable) available out there seen as a possible disruptive asset, user interface re‑thinking, change management and the importance of setting up new business models in favour of the “overrated technology role”.

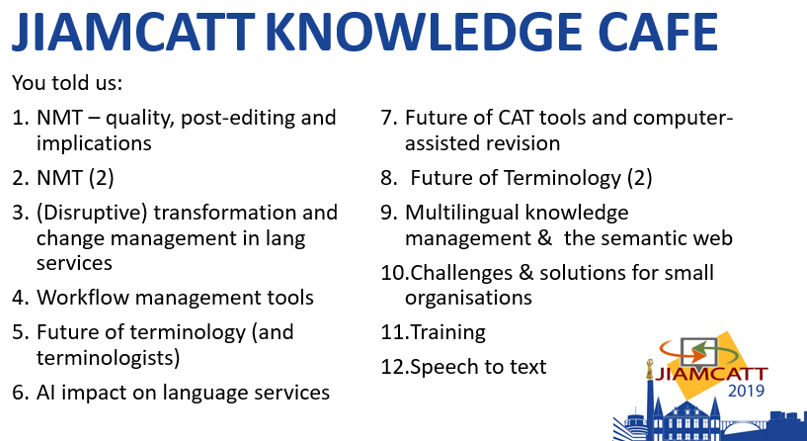

Knowledge café working groups

A series of workshops were organised during the first day and covered the following 12 topics:

Participants from UN and EU bodies, Universities and NGOs brainstormed about the main challenges of each one of the above-mentioned topics and proposed solutions.

NMT and Future of CAT tools

What came out from the NMT (1-2) and Future of CAT tools (7) working groups was that, nowadays, there is almost no translator that translates a segment from scratch without consulting an existing resource coming from:

- A translation memory (concordance option)

- Multilingual corpora (internal or publicly available resources)

- Terminology database (IATE, UNTERM, FAOTERM, etc.)

- Machine translation.

As it is understandable, this working method puts in question, once again, the role of the translator and her/his skills. It is not new that translators need to be trained on post-editing techniques and be aware of the difference between “post-editing” and “revision”: post-editing happens when a translator has to edit the output of a machine translation system, while a revision occurs when the translator revises the translation provided by a human translator. In this last case, the original translator (not the reviser) might have been in the position of using, as input for her/his translation, both translation memories and machine translation suggestions. With the increased quality provided by NMT, the question of who is the best profile needed to carry out a revision or a post-editing task comes into play: translators or subject matter experts?

Combination of TMs and MT proposals during pre-translation: new business model?

On the basis of the discussions of the working groups, it was clear for me that, the combination of these two “resources” (machine translation and translation memories suggestions) configures a business model that mixes the work of the translator who has to use different abilities, hence making different cognitive efforts: a suggestion coming from a translation memory needs “revision” (minor effort), while a suggestion coming from a machine translation system needs “post‑editing” (major effort (?)). In such a mixed approach (TMs plus MTs), it is challenging to trigger the switch between these two types of cognitive approaches and still make sure to stick to the quality requirements expressed by the translation requester (e.g. light/full post-editing).

TAUS, in its DQF Business Intelligence Bulletin Q1-2019 recently showed that in some cases translators feel more comfortable to revise fuzzy matches coming from a translation memory rather than a machine translation proposal. The reason behind this argument lies on the fact that a CAT tool, like SDL Trados Studio, shows the differences between what is to be translated and what is contained in the translation memory with track changes: this means that it is easier and faster for the translator to identify what needs to be changed. In the case of NMT proposals, there is no immediate way (except in the presence of grammatical, lexical and syntactical errors) to understand what can be wrong by simply reading them, especially now that NMT systems are generating perfect translations from a “fluency” point of view, but where the overall meaning of a sentence might be distorted.

These considerations make us wonder how such mixed scenarios fit into the (already existing) business models of language service providers and international organisations, (always laying a bit behind the private industry): what and how is a requester invoiced (weighted count for translation memory matches + x% reduction for not translated segments that come from an MT system? And who pays for the MT system creation, maintenance, etc.?) and what and how is a language service provider paid.

Quality of data and the wrong assumption of the origin of the translation proposals

It is known that data quality is essential to guarantee an optimal use of any kind of resources (e.g. terminology, translation memories, machine translation, corpora) and the overload of information can be disruptive for the translator. During the JIAMCATT, Joke Daems highlighted how, in an experiment, translators seemed to perform better in carrying out revision or post-editing tasks when they had the wrong assumption of what was the origin of the text: they were “tricked” and believed that they were carrying out a post-editing task when actually the translation was done by a human, and a revision task when the translation was done by an NMT system. Knowing that a translation is generated by an MT system still creates prejudices.

Future of CAT tools

With the integration of MT in translation workflows, it seems that CAT tools need to be rethought and adapted to new scenarios: they should, for instance, be accessible not only to linguists but also to non-linguists experts in a given domain of specialisation (some tools already provide this functionality). Topics such as “integration between several systems” or “better sharing of best‑practices”, popped up during the JIAMCATT working group discussions. Although these remain the hot topics around CAT-tools-related matters, I personally believe that they should not be considered any longer in the framework of “CAT tools future plans” as these matters are rather a constant that seems far from being solved/closed.

The introduction of NMT in the business model of a translation workflow, does not impact the co‑existence of CAT tools. Such tools are not only meant to provide translation memory matches. They are much more than this: they provide spell checker functionalities, auto-propagation options, terminology integration, QA checkers (not translated segments, inconsistent translations, repeated words, number inconsistencies, etc.), extended concordance functionalities (who does not consult Google to verify how to write a word, look for an expression within a specific context, etc.?), and much more.

The future of CAT tools should rather be shaped to accommodate the new business model requiring quicker access to translations and resources, different types of quality outputs (to be sold as a service), a better and targeted re-use of already existing translations (integration with CMS?), speech recognition functionalities and re-shaped Quality Assurance tools.

Artificial Intelligence applied to translation

During the JIAMCATT, discussions around the role of AI applied to NLP in general, and to translation and speech recognition applications in particular, were also tackled. In the context of NMT and CAT tools, it seems clear that additional efforts should be put in quality assurance functionalities able to automatically identify possible issues in NMT outputs in order to give additional added value to such tools and boost productivity.

To do that, Artificial Intelligence applications able to detect a possible change of meaning between the source and the translation should intervene. During the JIAMCATT, WIPO representatives presented automatic quality estimation methods able to identify low quality outputs produced by an NMT system. On the other hand, a representative of the CdT of the EU presented their current projects on speech recognition technologies aimed to automatise as much as possible speech to text processes to be applied to transcription and/or subtitling services, including the integration of automatic translation of subtitling.

*All views expressed here are my own.